The Semantic Model Is Having Its Moment

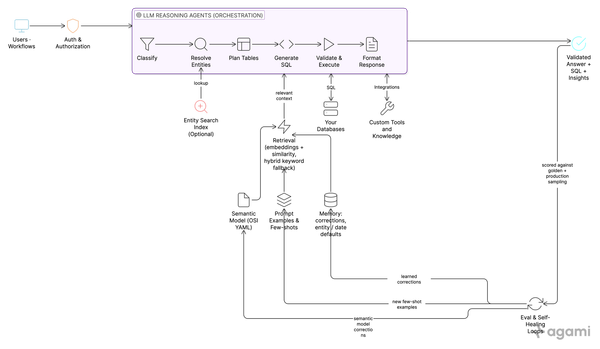

A decades-old BI concept just hit its all-time search peak. Every AI data agent in production now sits on top of a semantic model, and most are locked to one vendor's stack. Here is the history, the current landscape, and the open standard that breaks the lock-in.

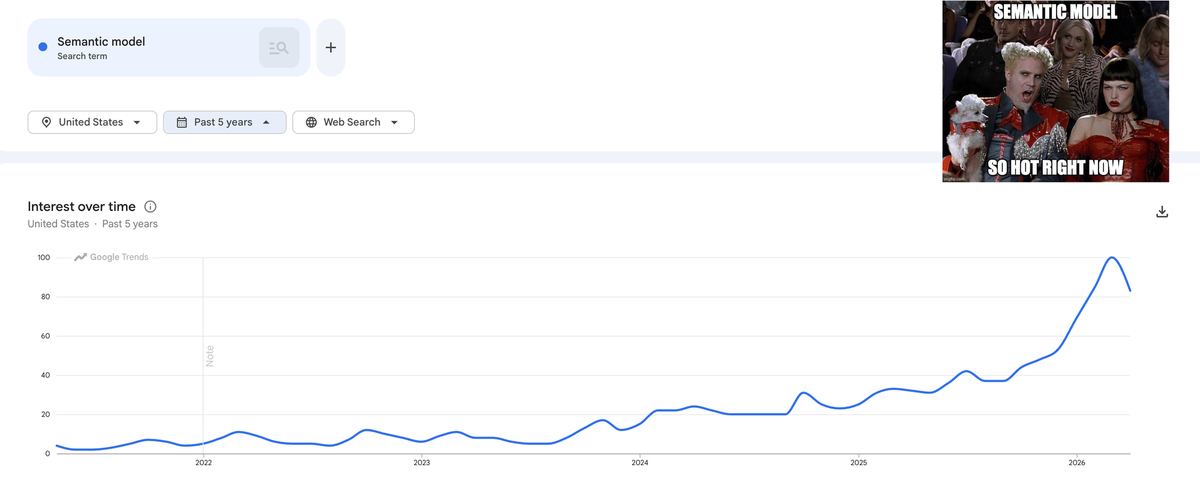

Search interest in "semantic model" just hit an all-time high. The reason is not nostalgia.

Every serious AI data agent in production now sits on top of one. Most teams are about to discover that the model they spend the next year authoring is locked to one vendor's stack.

TL;DR

- "Semantic model" search interest is at an all-time high in the United States [1]. Every AI data agent in production now needs one because LLMs writing SQL hallucinate without a governed metric definition.

- Every warehouse and every BI tool has shipped a semantic model layer paired with an AI agent in the last two years. Most are proprietary. Author your model in LookML and it does not work in Power BI.

- The Open Semantic Interchange (OSI) initiative was announced 23 September 2025 and the v1 spec was finalized 27 January 2026 under Apache 2.0 [2] [3]. Microsoft and Google are not members.

- At Agami, we consume OSI natively. You author once in OSI YAML, in your git repo. We point at the file. There is no Agami semantic model schema.

A long time ago, in a job far far away

At my first job, building educational software, I lived inside Crystal Reports and BusinessObjects. The "universe" I was authoring against was a semantic model in everything but name, and authoring it was GUI hell.

That universe is back. Every warehouse, every BI tool, and every AI data agent in 2026 is built on top of one, and most teams have not realized it yet.

Two things shifted. AI data agents started needing semantic models the way self-driving cars need high-definition maps. And LLM-assisted authoring made building one go from months of analyst work to hours. The combination is why the search trend looks the way it does.

Three waves of semantic models

Wave 1: The GUI / universe era

Semantic models started as point-and-click artifacts inside thick desktop tools. BusinessObjects shipped its "universe" concept in the mid-1990s and built an empire around it. Cognos Framework Manager arrived inside Cognos 8 BI in 2005. Microsoft Analysis Services landed in 2000 and put OLAP cubes on every enterprise developer's machine. Crystal Reports was the canonical reporting experience layered on top.

What every Wave 1 tool had in common: the model lived inside one vendor's reporting suite. The semantic layer was a feature of the BI tool, not a portable artifact. If you wanted to use the model elsewhere, you rebuilt it there.

Wave 2: The code / git era

Looker, founded in 2012, reinvented the category by treating semantics as version-controlled code. LookML moved the model out of the GUI and into a git repo. Engineers could review semantic changes in a pull request the same way they reviewed application code. dbt followed in 2023 with the Semantic Layer powered by MetricFlow, putting the metrics definition in the same repo as the transforms that produced the underlying tables. MetricFlow itself is Apache 2.0 [10].

Wave 2 fixed the workflow. Engineers as authors, git as the change management system. But each tool still shipped its own DSL. LookML did not run in dbt. dbt MetricFlow did not run in Cube. The lock-in moved from the GUI to the file format.

Wave 3: The AI era

LLMs broke the old equilibrium. The semantic model stopped being a BI artifact and became an agent-grounding artifact. Every vendor noticed within the same eighteen months.

Snowflake shipped Semantic Views and pointed Cortex Analyst at them [5]. Databricks shipped Unity Catalog Metric Views and pointed AI/BI Genie at them [6]. Salesforce, Tableau's parent, acquired Waii and built Tableau Next on top [8]. Microsoft renamed datasets to Power BI Semantic Models and pointed Copilot at them [9]. ThoughtSpot announced Spotter Semantics [12]. Looker rebranded its agent suite as Looker BI Agents at Next '26 [7]. Cube unified its data modeling and agent product into Cube Agentic Analytics [11].

The Open Semantic Interchange (OSI) initiative was announced 23 September 2025 by Snowflake, Salesforce, dbt Labs, BlackRock, and RelationalAI as a vendor-neutral specification for exchanging semantic metadata. The v1 spec was finalized 27 January 2026 under Apache 2.0 [2] [3]. The repo is at github.com/open-semantic-interchange/OSI [4].

What a semantic model actually contains

If you have not authored one recently, the building blocks are worth a refresh. A modern semantic model captures:

- Entities and tables. The grain of the model. Customer, order, subscription, incident. Each maps to a physical table or view.

- Dimensions. The attributes used to slice, group, and filter. Region, product category, customer segment, account stage.

- Measures and metrics. The numbers, with the actual business definition embedded. ARR is the sum of monthly recurring values from active subscriptions multiplied by 12, with filters on status and line item type to exclude one-time fees and pending cancellations. That sentence lives in the model, not in tribal knowledge.

- Relationships and joins. How entities connect, and on which keys. Defined once so the agent does not invent them.

- Time grains and a fiscal calendar. So "Q4" means the same thing for finance, sales, and ops, regardless of fiscal versus calendar year.

- Row-level security hooks. The semantic model declares which user attributes scope which tables. Enforcement should live in the database (Snowflake row access policies, Postgres RLS, BigQuery row-level security) so a curious user with direct SQL access cannot bypass it. The semantic layer's job is to know which database policy applies to which question, and to refuse to generate SQL that ignores it.

- Hierarchies and drill paths. Country to state to city to store. Controlled exploration instead of random spelunking.

Each one of these is a place an AI agent would otherwise hallucinate. The semantic model removes the choice.
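The building blocks above can be sketched as plain data structures. What follows is a minimal illustration in Python, not the OSI schema or any vendor's format. Every class, field, table, and column name (`Measure`, `analytics.subscriptions`, `monthly_recurring_value`, and so on) is hypothetical, and the ARR filters are one possible reading of the definition in the list.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Dimension:
    name: str    # business-facing name, e.g. "region"
    column: str  # physical column it maps to (hypothetical)


@dataclass(frozen=True)
class Measure:
    name: str
    expression: str                # aggregate SQL expression
    filters: tuple[str, ...] = ()  # predicates that are part of the definition


@dataclass
class Dataset:
    name: str
    table: str
    dimensions: dict[str, Dimension] = field(default_factory=dict)
    measures: dict[str, Measure] = field(default_factory=dict)


def compile_query(dataset: Dataset, measure: str, group_by: list[str]) -> str:
    """Build SQL from governed definitions only; unknown names raise KeyError."""
    m = dataset.measures[measure]
    dims = [dataset.dimensions[d] for d in group_by]
    select = [f"{d.column} AS {d.name}" for d in dims]
    select.append(f"{m.expression} AS {m.name}")
    sql = f"SELECT {', '.join(select)} FROM {dataset.table}"
    if m.filters:
        sql += " WHERE " + " AND ".join(m.filters)
    if dims:
        sql += " GROUP BY " + ", ".join(d.column for d in dims)
    return sql


# Hypothetical ARR definition, loosely following the prose above.
subscriptions = Dataset(
    name="subscription",
    table="analytics.subscriptions",
    dimensions={"region": Dimension("region", "billing_region")},
    measures={
        "arr": Measure(
            name="arr",
            expression="SUM(monthly_recurring_value) * 12",
            filters=("status = 'active'", "line_item_type = 'recurring'"),
        )
    },
)

print(compile_query(subscriptions, "arr", ["region"]))
```

The design choice worth noticing is that the caller passes names, never SQL fragments. A measure or dimension that is not in the model fails loudly instead of being improvised.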

Two things changed at once

Why every team suddenly needs one

LLMs are very good at writing SQL. They are not good at knowing which of six revenue definitions your CFO uses. Six versions of revenue across six dashboards is a CFO problem in BI. It is a hallucination problem in AI. Every serious agent in production now sits on top of a semantic model, including the in-house agents at OpenAI, Meta, and Notion. There is no shortcut.
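One way to picture the difference: the agent resolves a business term against the governed model and declines when no definition exists, rather than picking one of the six. The sketch below is illustrative only. The metric names, synonyms, and SQL expressions are invented for the example and do not come from any real model.

```python
# Governed metric definitions (hypothetical names and expressions).
GOVERNED_METRICS = {
    "net_revenue": "SUM(amount) - SUM(refund_amount)",
    "gross_bookings": "SUM(contract_value)",
}

# Phrases users actually type, each mapped to exactly one governed metric.
SYNONYMS = {
    "revenue": "net_revenue",
    "net revenue": "net_revenue",
    "bookings": "gross_bookings",
}


class UnknownMetricError(LookupError):
    """Raised when a term has no governed definition."""


def resolve_metric(term: str) -> tuple[str, str]:
    """Return (metric_name, sql_expression), or raise; never guess."""
    key = term.strip().lower()
    name = SYNONYMS.get(key, key.replace(" ", "_"))
    if name not in GOVERNED_METRICS:
        raise UnknownMetricError(f"No governed definition for {term!r}")
    return name, GOVERNED_METRICS[name]


print(resolve_metric("Revenue"))
```

Asking for a term such as "adjusted revenue" raises `UnknownMetricError` in this sketch, which is the behavior you want from an agent: a refusal is cheaper than a confident wrong number.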

Why every team can suddenly build one

Authoring used to take months. An analyst would interview stakeholders, document business rules, and hand-write YAML or LookML or DAX. LLM-assisted database introspection has compressed that to hours. Snowflake calls its version Semantic View Autopilot. Databricks shipped Genie Code. The category-wide pattern is the same: connect a database, the LLM proposes the model, an analyst edits, you ship. The bottleneck moved from labor to taste.
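The deterministic half of that pattern, reading the catalog and proposing a draft, can be sketched with nothing but the standard library. This example uses an in-memory SQLite database as a stand-in for a warehouse. The output dictionary shape, the `propose_model` function, and the toy tables are assumptions for illustration, not how Semantic View Autopilot or Genie Code work. The LLM step that would suggest metrics is not shown.

```python
import sqlite3


def propose_model(conn: sqlite3.Connection) -> dict:
    """Draft datasets and joins from catalog metadata, for an analyst to review."""
    datasets, relationships = [], []
    tables = [
        row[0]
        for row in conn.execute(
            "SELECT name FROM sqlite_master "
            "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name"
        )
    ]
    for table in tables:
        # PRAGMA table_info rows: (cid, name, type, notnull, dflt_value, pk)
        columns = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
        datasets.append(
            {"name": table, "fields": [{"name": c[1], "type": c[2]} for c in columns]}
        )
        # PRAGMA foreign_key_list rows: (id, seq, table, from, to, ...)
        for fk in conn.execute(f'PRAGMA foreign_key_list("{table}")'):
            relationships.append(
                {"from": f"{table}.{fk[3]}", "to": f"{fk[2]}.{fk[4]}"}
            )
    # "draft" marks the proposal as unreviewed; a human still edits and approves.
    return {"datasets": datasets, "relationships": relationships, "status": "draft"}


conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE customer (id INTEGER PRIMARY KEY, region TEXT);
    CREATE TABLE orders (
        id INTEGER PRIMARY KEY,
        customer_id INTEGER REFERENCES customer(id),
        amount REAL
    );
    """
)
draft = propose_model(conn)
print(draft["relationships"])
```

Declared foreign keys are the easy case. Many warehouses do not declare them, which is where inference and analyst judgment come in.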

What an OSI semantic model looks like

Here is the open standard in practice. These are the first 20 lines of the TPC-DS retail example shipped in the OSI repository [4]:

    # yaml-language-server: $schema=../core-spec/osi-schema.json
    # TPC-DS Semantic Model Example
    # This example demonstrates the OSI Core Metadata Spec using the TPC-DS benchmark schema
    # TPC-DS is a decision support benchmark with a realistic retail business model

    version: "0.1.1"

    semantic_model:
      - name: tpcds_retail_model
        description: TPC-DS retail semantic model for sales and customer analytics
        ai_context:
          instructions: "Use this semantic model for retail analytics. It provides comprehensive
            sales, customer, product, and store data from the TPC-DS benchmark. The model
            supports time-based analysis, customer segmentation, product performance, and
            store operations metrics."
        datasets:
          # Fact table: Store sales transactions
          - name: store_sales

Full example at github.com/open-semantic-interchange/OSI/blob/main/examples/tpcds_semantic_model.yaml (the example file uses the 0.1.x schema; v1 was finalized 27 January 2026). Founding members include Snowflake, Salesforce, dbt Labs, BlackRock, and RelationalAI, with 39+ partners signed on [3].
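To make the `ai_context` idea concrete, here is a rough sketch of how a consumer might turn that metadata into grounding text for an agent. It uses a hand-written Python dict that mirrors only the keys visible in the excerpt above, with the instructions string abbreviated, rather than parsing the real file. The `grounding_prompt` function and its output format are hypothetical and are not part of the OSI spec.

```python
# The excerpt above as it might look after YAML parsing (hand-written dict;
# only keys visible in the excerpt are used, instructions abbreviated).
osi_doc = {
    "version": "0.1.1",
    "semantic_model": [
        {
            "name": "tpcds_retail_model",
            "description": "TPC-DS retail semantic model for sales and customer analytics",
            "ai_context": {
                "instructions": "Use this semantic model for retail analytics."
            },
            "datasets": [{"name": "store_sales"}],
        }
    ],
}


def grounding_prompt(doc: dict) -> str:
    """Flatten model metadata into text an agent could receive as context."""
    lines = []
    for model in doc.get("semantic_model", []):
        lines.append(f"Model: {model['name']}")
        instructions = model.get("ai_context", {}).get("instructions")
        if instructions:
            lines.append(f"Instructions: {instructions}")
        names = [d["name"] for d in model.get("datasets", [])]
        lines.append("Datasets: " + ", ".join(names))
    return "\n".join(lines)


print(grounding_prompt(osi_doc))
```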

Catalogs joined too. Atlan, Alation, Collibra, DataHub, and Select Star sit upstream of the semantic model. They inventory data assets, track lineage, and curate the glossary terms a semantic definition references. They are not a substitute for a semantic model; they are a feeder layer. OSI gives them a portable target to feed into.

That is the open story. The other half of the picture is what every vendor is doing inside their own walls.

The vendor landscape

Eight vendors. Eight semantic models. Eight AI agents. Most use a flavor of YAML. None of them talk to each other.

| Vendor | Semantic model | AI agent | Format | Spec license | OSI member? |
|---|---|---|---|---|---|
| Looker (Google) | LookML | Looker BI Agents (Conversational Analytics, Dashboard Agents) | LookML DSL | Proprietary | No (building competing "Open SQL Interface" [14]) |
| Power BI (Microsoft) | Power BI Semantic Models (Tabular, DAX, TMDL) | Copilot in Power BI / Fabric | TMDL | Proprietary | No [13] |
| Tableau (Salesforce) | Published Data Sources + Waii knowledge graph | Tableau Next (Waii-powered), Tableau Pulse, Agentforce | .tds + Waii graph | Proprietary | Yes (founding) |
| Snowflake | Semantic Views | Cortex Analyst (with Semantic View Autopilot) | YAML | Proprietary | Yes (founding) |
| Databricks | Unity Catalog Metric Views | AI/BI Genie (with Genie Code) | YAML | Spark engine Apache 2.0; Metric Views spec Databricks-specific | Yes (joined at spec finalization) |
| dbt Labs | Semantic Layer (MetricFlow) | dbt Analyst Agent (beta) | YAML | Apache 2.0 (engine and spec) | Yes (founding) |
| Cube | Cube Schema | Cube Agentic Analytics (was Cube D3) | YAML / JS | Apache 2.0 (Cube Core) | Yes |
| ThoughtSpot | TML, Spotter Semantics | Spotter | TML | Proprietary | Yes |
| Agami | OSI-native (programmatically transformable to any format) | LLM Assistant native (Claude Code, Cursor, ChatGPT) | OSI YAML | Apache 2.0 (consumes OSI spec) | No (not a member; consumes OSI natively) |

Look down the "Spec license" column, then the "OSI member?" column. Most rows say proprietary, and the loudest holdouts on standards (Microsoft, Google) are not at the table. Lock-in dressed in YAML.

Two patterns are worth flagging. Databricks joined OSI at spec finalization, four months after the launch announcement, rather than as a founding member. That is a tell about how the standards conversation is going. And Google is shipping a competing standard called Open SQL Interface with Mode and SAP Analytics Cloud [14], which suggests the war over the open semantic format is not yet over.

Agami's approach: OSI-native and LLM-Assistant-native

You author your semantic model once, in OSI YAML, and it lives in your git repo alongside your dbt models, your dashboards, and your analytics code. You point Agami at the file. Agami consumes it directly.

Authoring the OSI YAML is not something you have to do alone. Agami ships skills that bootstrap the model from three signals at once: introspection of your live database schema, LLM-assisted inference of joins and metrics, and any glossary, semantic catalog, or business-rule documents your team already maintains. Schema to production semantic model in hours.

There is no proprietary middle format you have to translate to and from. There is no Agami semantic model schema. OSI is the schema.

If you ever want to use that same model in another OSI-aligned tool, you can. OSI's converter ecosystem ships for Snowflake and Salesforce today. dbt and Databricks have spec-level support but no converter has shipped yet, and LookML, Cube, and MetricFlow are not yet covered. The hub-and-spoke architecture means each new converter unlocks every other vendor for free.
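The arithmetic behind the hub-and-spoke claim is easy to sketch. In the toy example below, each format gets one importer into a hub representation and one exporter out of it, so any-to-any conversion is two hops. The format names, the `register` and `convert` helpers, and the converters themselves are placeholders that only relabel a tag. They say nothing about how real OSI converters are implemented.

```python
from typing import Callable

Model = dict  # stand-in for a parsed semantic model

# One importer (native -> hub) and one exporter (hub -> native) per format.
# Format names and converters here are hypothetical placeholders.
to_hub: dict[str, Callable[[Model], Model]] = {}
from_hub: dict[str, Callable[[Model], Model]] = {}


def register(fmt: str) -> None:
    """Register toy converters that only relabel the model's format tag."""
    to_hub[fmt] = lambda m: {**m, "format": "hub"}
    from_hub[fmt] = lambda m, fmt=fmt: {**m, "format": fmt}


def convert(model: Model, source: str, target: str) -> Model:
    """Any-to-any conversion is always two hops through the hub."""
    return from_hub[target](to_hub[source](model))


def pairwise_converters(n: int) -> int:
    return n * (n - 1)  # one direct converter per ordered pair of formats


def hub_converters(n: int) -> int:
    return 2 * n  # one importer plus one exporter per format


for fmt in ("format_a", "format_b", "format_c"):
    register(fmt)

print(convert({"name": "sales", "format": "format_a"}, "format_a", "format_c"))
print(pairwise_converters(8), hub_converters(8))
```

With eight formats, direct translation needs 56 one-way converters while the hub needs 16, and adding a ninth format costs two more converters instead of sixteen.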

The other half of the stance: Agami runs as a skill+MCP solution inside the LLM assistant your team already uses. Claude Code, Cursor, ChatGPT, your IDE. There is no new BI tool to log into, no separate UI to learn. The agent meets your data team where they already work, and your business users in the channels they already use. Every other vendor in the table above ships an AI agent that lives inside their own product. Agami is the one that lives inside yours.

The point

The semantic model belongs to you, not to the vendor that hosts your data this quarter. If you are picking a stack now, pick the one that consumes the open standard natively, and skip the translation tax.


Want to see it on real data? Book a demo and we'll run Agami against your enterprise data.

References

1. Google Trends, "semantic model," United States, past 5 years. https://trends.google.com/trends/explore?date=today%205-y&geo=US&q=semantic%20model

2. Open Semantic Interchange initiative announcement, Snowflake press release, 23 September 2025. https://www.snowflake.com/en/news/press-releases/snowflake-salesforce-dbt-labs-and-more-revolutionize-data-readiness-for-ai-with-open-semantic-interchange-initiative/

3. Open Semantic Interchange v1 specification finalized, Snowflake blog, 27 January 2026. https://www.snowflake.com/en/blog/open-semantic-interchanges-specs-finalized/

4. Open Semantic Interchange GitHub repository and TPC-DS example. https://github.com/open-semantic-interchange/OSI

5. Snowflake Semantic Views overview documentation. https://docs.snowflake.com/en/user-guide/views-semantic/overview

6. Databricks, "Redefining Semantics: Data Layer for the Future of BI and AI." https://www.databricks.com/blog/redefining-semantics-data-layer-future-bi-and-ai

7. Google Cloud, Looker updates for agentic BI at Next '26. https://cloud.google.com/blog/products/business-intelligence/looker-updates-for-agentic-bi-at-next26

8. Salesforce signs definitive agreement to acquire Waii (August 2025). https://www.salesforce.com/news/stories/salesforce-signs-definitive-agreement-to-acquire-waii/

9. Microsoft, Copilot in Power BI introduction. https://learn.microsoft.com/en-us/power-bi/create-reports/copilot-introduction

10. dbt Labs, MetricFlow open-sourced under Apache 2.0 (Coalesce 2025). https://www.getdbt.com/blog/open-source-metricflow-governed-metrics

11. Cube Agentic Analytics announcement. https://cube.dev/blog/cube-agentic-analytics

12. ThoughtSpot introduces Spotter Semantics, March 2026. https://www.globenewswire.com/news-release/2026/03/12/3254770/0/en/ThoughtSpot-Introduces-Spotter-Semantics-to-Bring-Trust-and-Context-to-Enterprise-AI.html

13. CrossJoin analysis: Power BI and support for third-party semantic models, April 2026. https://blog.crossjoin.co.uk/2026/04/12/power-bi-and-support-for-third-party-semantic-models/

14. Google Cloud, "Opening up the Looker semantic layer" (competing Open SQL Interface). https://cloud.google.com/blog/products/business-intelligence/opening-up-the-looker-semantic-layer

15. Prior Agami blog, "Buy a CRM Subscription. Own Your Data Agent." https://blog.agami.ai/dont-build-your-own-crm-build-your-own-data-agent/

About the author

Sandeep Kachru is the co-founder of Agami AI. Before Agami, he spent over a decade building data platforms at Google, Meta, and Airtable.