Snowflake Cortex Analyst vs Databricks Genie vs BigQuery Gemini: warehouse-native AI compared

An honest head-to-head of the three warehouse-native AI products. Where each wins, where each loses, and the architectural blind spot they all share.

Comparison · Warehouse-native AI · 9 min read


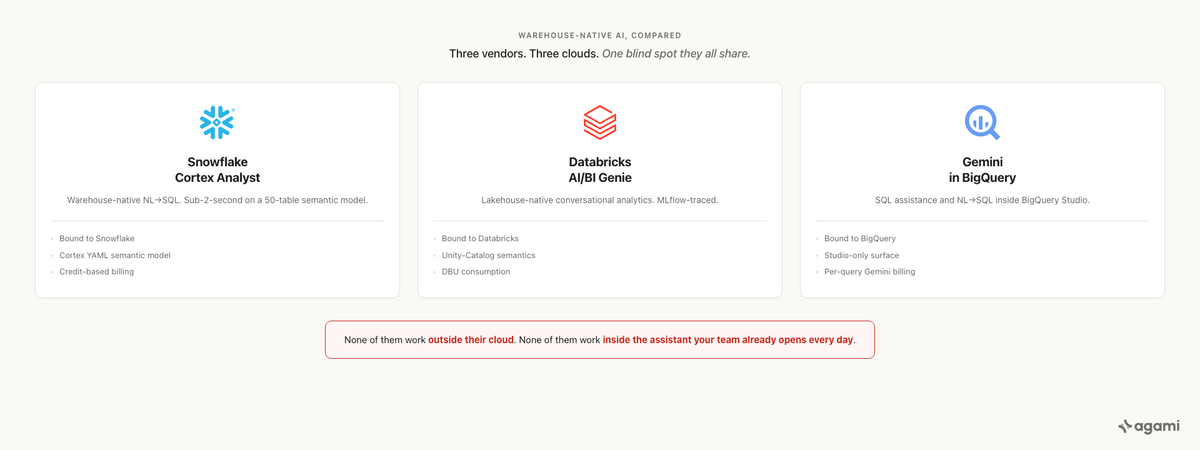

The three hyperscalers have shipped the same answer to the same question: bake AI directly into the warehouse and let analysts ask in plain English. The products differ less than the marketing pages suggest, and the architecture they share has the same blind spot.

TL;DR · warehouse-native AI compared, by the dimension that matters

- All three products turn natural language into SQL against their own warehouse. They are excellent on the in-warehouse path, slow or absent on the cross-warehouse path, and equally locked into their cloud.

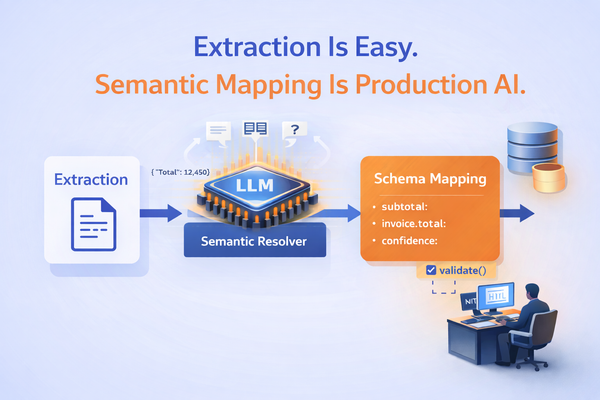

- The biggest architectural difference is the semantic-model story: Cortex Analyst ships its own YAML, Genie leans on Unity Catalog, BigQuery Gemini reads from Dataform. None of them is portable: if you swap warehouses, you rebuild.

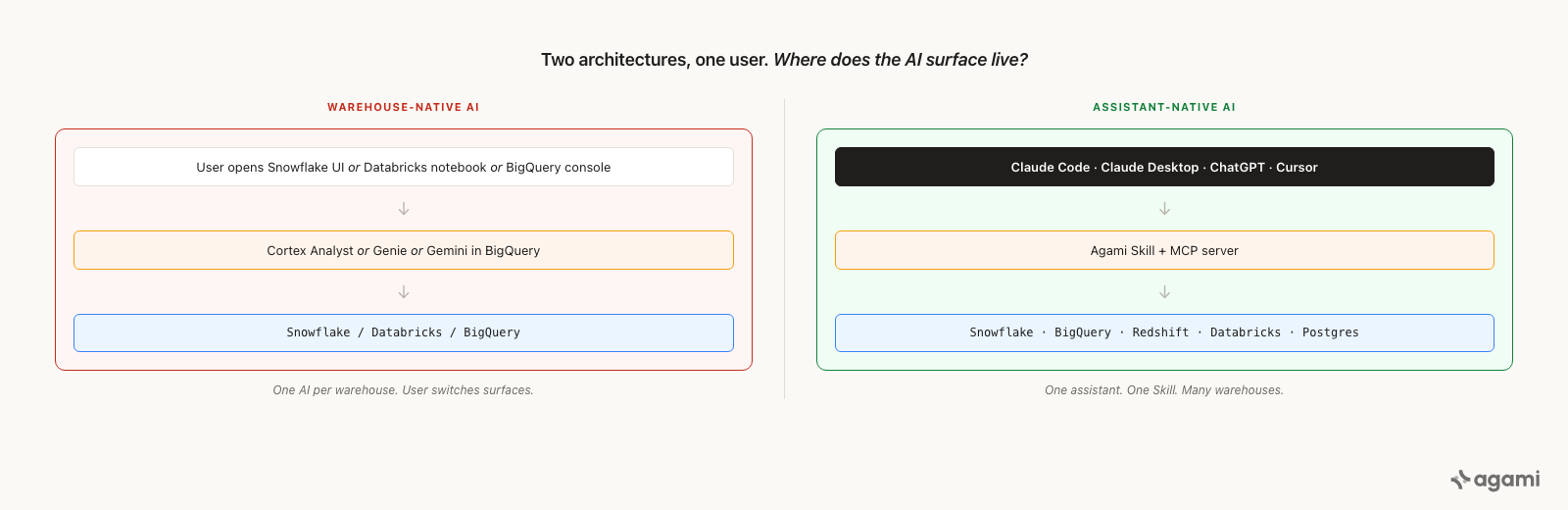

- The architectural blind spot all three share is the assistant. None of them runs inside Claude Code, ChatGPT, or Cursor, the surfaces where engineers and analysts actually live. The agent surface is the warehouse vendor's UI, not the user's editor.

The category and the contestants

A "warehouse-native" AI product is one that lives inside the warehouse vendor's stack and runs natural-language queries against the warehouse's own tables, with the warehouse's own auth, governance, and pricing model. The three big contestants in 2026:

Snowflake Cortex Analyst is Snowflake's NL-to-SQL service running inside Cortex AI. It reads a YAML "semantic model" the customer authors, exposes it as a REST endpoint, and generates SQL that runs against Snowflake. Charged on Cortex credits.

Databricks AI/BI Genie is Databricks's NL-to-SQL surface, accessed inside Databricks Notebooks and AI/BI Dashboards. Reads metadata from Unity Catalog (table descriptions, column comments, row-level security policies) and generates SQL that runs against Databricks. Charged on DBUs.

BigQuery Gemini is Google Cloud's NL-to-SQL surface, accessed inside the BigQuery console and integrated with Looker Studio + Looker BI Agents. Reads Dataform-defined views and metadata from BigQuery's Information Schema. Charged on Vertex AI usage + BigQuery slot consumption.

All three were generally available (GA) by mid-2026: Cortex Analyst, Databricks AI/BI Genie, and Gemini in BigQuery. All three have at least three big-name customers running them in production, per their own case-study pages. All three are real products, not vaporware.
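Under the product differences, all three run the same basic loop: read catalog metadata (Cortex Analyst's YAML, Unity Catalog descriptions and comments, BigQuery's Information Schema and Dataform views), put it in the model's context, and generate SQL. A minimal sketch of the metadata step, assuming hypothetical `Table`/`Column` shapes and a made-up plain-text layout; this is not any vendor's actual prompt format.

```python
from dataclasses import dataclass, field


@dataclass
class Column:
    name: str
    data_type: str
    comment: str = ""  # column comment from the catalog, if anyone wrote one


@dataclass
class Table:
    name: str
    description: str
    columns: list[Column] = field(default_factory=list)


def schema_context(tables: list[Table]) -> str:
    """Render catalog metadata as plain text for an NL-to-SQL prompt (hypothetical format)."""
    lines = []
    for table in tables:
        lines.append(f"TABLE {table.name}: {table.description}")
        for col in table.columns:
            note = f"  -- {col.comment}" if col.comment else ""
            lines.append(f"  {col.name} {col.data_type}{note}")
    return "\n".join(lines)


# Hypothetical table, for illustration only.
orders = Table("orders", "One row per customer order", [
    Column("order_id", "BIGINT"),
    Column("amount", "DECIMAL(12,2)", "Gross amount in USD, including tax"),
])
context = schema_context([orders])
```

In this sketch the model sees only what the catalog says, so an uncommented `amount` column would leave "gross or net?" for the model to guess.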

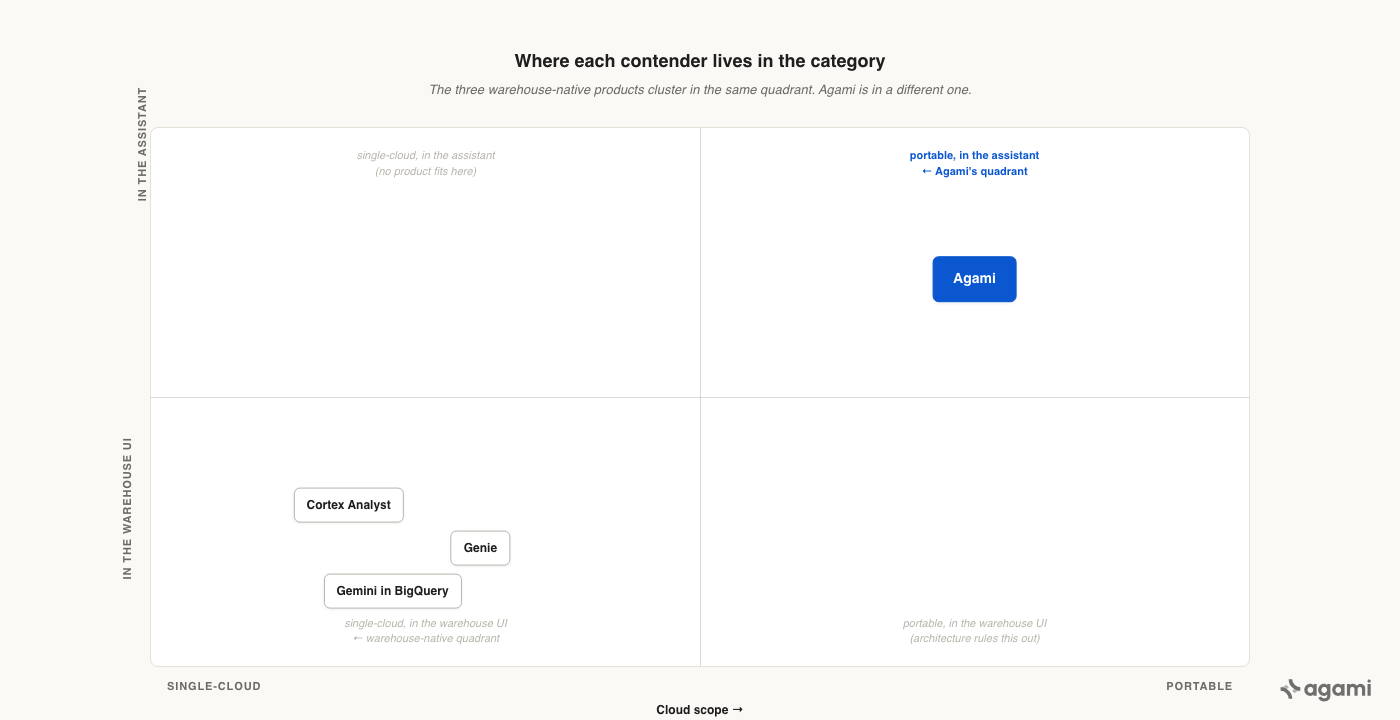

The three warehouse-native contestants share a quadrant. Agami is in a different one.

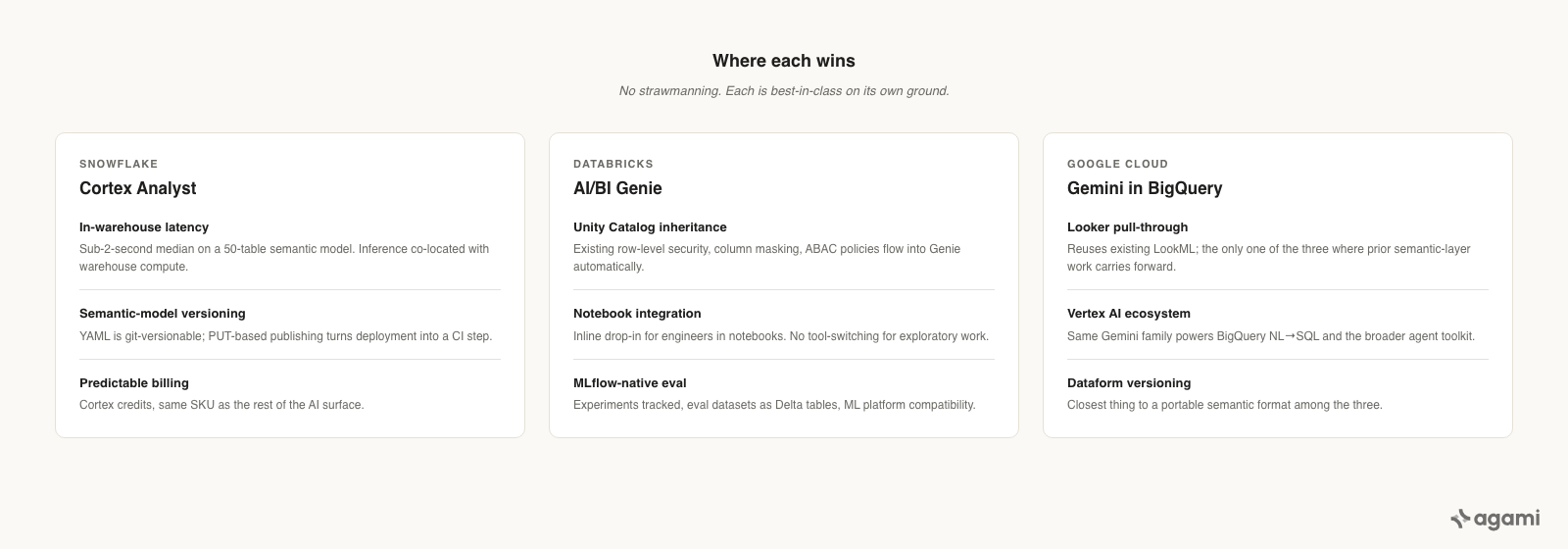

Where each wins

Honest strengths of each, drawn from their own docs and verified customer cases.

Cortex Analyst wins on:

- In-warehouse latency. Sub-2-second median on a 50-table semantic model is what we've seen on a customer's reference workload, consistent with Snowflake's own latency guidance. The Cortex inference layer is co-located with the warehouse compute, so the round-trip is short.

- Semantic-model versioning. The YAML is git-versionable, reviewable in PRs, and Snowflake provides a stage-based publishing flow (`PUT @semantic_model_stage`) that turns model deployment into a repeatable CI step.

- Credit-based billing. Predictable cost: it draws on the same Cortex credits as the rest of the AI surface, so finance can model spend without a separate SKU.
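The "repeatable CI step" can be as small as one generated statement. A minimal sketch, assuming a hypothetical repo path (`models/revenue.yaml`) and a hypothetical fully qualified stage name; `AUTO_COMPRESS` and `OVERWRITE` are standard options of Snowflake's `PUT`, and the pipeline would hand the resulting string to SnowSQL or the Snowflake Python connector.

```python
from pathlib import Path


def publish_statement(model_path: str, stage: str) -> str:
    """Build a Snowflake PUT that uploads one semantic-model YAML to a named stage.

    stage is given without the leading '@', e.g. "analytics.models.semantic_model_stage".
    """
    path = Path(model_path)
    if path.suffix not in {".yaml", ".yml"}:
        raise ValueError(f"expected a YAML semantic model, got {path.name}")
    uri = "file://" + path.resolve().as_posix()
    # Quoting the URI tolerates spaces in paths. AUTO_COMPRESS=FALSE keeps the file as
    # plain YAML (no .gz suffix); OVERWRITE=TRUE lets a CI re-run replace the old version.
    return f"PUT '{uri}' @{stage} AUTO_COMPRESS=FALSE OVERWRITE=TRUE"


# Hypothetical path and stage, for illustration only.
stmt = publish_statement("models/revenue.yaml", "analytics.models.semantic_model_stage")
```

Failing fast on a non-YAML file keeps a mis-pointed pipeline from silently publishing the wrong artifact.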

Databricks Genie wins on:

- Unity Catalog inheritance. Existing Unity Catalog policies (row-level security, column masking, attribute-based access) flow automatically into Genie. No "build a parallel governance layer for the AI"; the warehouse's governance is the AI's governance.

- Notebook integration. A data engineer in a Databricks notebook can drop into Genie inline. The handoff between exploratory SQL and natural-language questions is native; nobody switches tools.

- MLflow-native eval. Genie evaluation runs through MLflow with tracked experiments, eval datasets as Delta tables, and compatibility with the rest of the ML platform. For ML teams already standardized on Databricks, evaluation slots into tooling they already run.
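Whatever harness tracks it, the core of a text-to-SQL eval is usually execution accuracy: run the generated SQL and a hand-written gold query against the same data and compare the results. A minimal sketch of that metric, with the standard library's `sqlite3` standing in for Delta tables; it does not use MLflow's API, and the table and cases are made up for illustration.

```python
import sqlite3
from collections import Counter


def execution_match(conn: sqlite3.Connection, generated_sql: str, gold_sql: str) -> bool:
    """True if both queries return the same rows, ignoring row order."""
    try:
        got = conn.execute(generated_sql).fetchall()
    except sqlite3.Error:
        return False  # generated SQL that does not run counts as a miss
    want = conn.execute(gold_sql).fetchall()
    return Counter(got) == Counter(want)


def execution_accuracy(conn: sqlite3.Connection, cases: list[tuple[str, str, str]]) -> float:
    """cases: (question, generated_sql, gold_sql) triples; returns the share that match."""
    if not cases:
        return 0.0
    return sum(execution_match(conn, gen, gold) for _, gen, gold in cases) / len(cases)


conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (id INTEGER, region TEXT, amount REAL);
    INSERT INTO orders VALUES (1, 'US', 10.0), (2, 'EU', 5.0), (3, 'US', 7.0);
""")
cases = [
    ("Total US revenue?", "SELECT SUM(amount) FROM orders WHERE region='US'",
     "SELECT SUM(amount) FROM orders WHERE region = 'US'"),
    ("How many orders?", "SELECT COUNT(*) FROM orders WHERE region = 'US'",
     "SELECT COUNT(*) FROM orders"),
    ("Revenue by region?", "SELECT region, SUM(amount) FROM orders GROUP BY region",
     "SELECT region, SUM(amount) FROM orders GROUP BY region ORDER BY region DESC"),
]
score = execution_accuracy(conn, cases)  # 2 of 3 match
```

Comparing results rather than SQL text is the design choice: the first and third cases differ textually from their gold queries but still count as correct.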

BigQuery Gemini wins on:

- Looker pull-through. If the customer already has LookML defined, Looker BI Agents (the customer-facing surface) reuses the LookML semantic layer rather than asking the customer to author a new one. This is the only one of the three where an existing semantic-layer investment carries forward.

- Vertex AI feature ecosystem. The same Gemini model family that powers BigQuery NL-to-SQL also powers Vertex AI's broader agent toolkit. For teams already using Vertex AI, the integration cost is lower.

- Dataform versioning. Dataform's git-based view definitions are the closest thing to a portable semantic model among the three; the views themselves move if the warehouse moves, even if the AI surface doesn't.

Honest strengths, side by side. Each is best-in-class on its own ground.

Where each loses

Honest shortcomings, drawn from competitor docs and from customer escalations we've seen.

Cortex Analyst loses on:

- Cloud lock-in. Works only against Snowflake. If your data is in BigQuery, Redshift, or Postgres, Cortex Analyst is not on the table.

- Semantic-model authoring overhead. The YAML format is Snowflake-specific. The customer authors it, the customer maintains it, and the customer rebuilds it if they ever leave Snowflake. There's no standards body behind it.

- Assistant integration is REST-only. The assistant surface (Claude, ChatGPT, Cursor) calls Cortex Analyst as a REST endpoint. That works, but none of the assistant's prompt caching, tool-use ergonomics, or in-conversation re-execution carries through. Each call is a fresh hit, so multi-turn analysis is awkward.
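What "each call is a fresh hit" means in practice: the REST caller, not the endpoint, owns the conversation, so every follow-up re-sends the whole history. A minimal sketch, assuming the request shape we read from Snowflake's Cortex Analyst REST API docs (a `messages` list plus a `semantic_model_file` stage path, with replies under the `analyst` role); verify field names against the current API reference. `AnalystConversation`, the account URL, and the stage path are hypothetical, and auth headers and the HTTP call itself are omitted.

```python
import json

# Assumed endpoint path, per our reading of the Cortex Analyst REST API docs.
ANALYST_PATH = "/api/v2/cortex/analyst/message"


class AnalystConversation:
    """Hypothetical client-side session for a stateless NL-to-SQL REST endpoint."""

    def __init__(self, account_url: str, semantic_model_file: str):
        self.url = account_url.rstrip("/") + ANALYST_PATH
        self.semantic_model_file = semantic_model_file  # stage path to the YAML model
        self.messages: list[dict] = []

    def next_request(self, question: str) -> str:
        """Append the user's turn and return the JSON body to POST to self.url.

        Nothing is kept server-side, so every request re-sends all prior turns.
        """
        self.messages.append({"role": "user", "content": [{"type": "text", "text": question}]})
        return json.dumps({"messages": self.messages, "semantic_model_file": self.semantic_model_file})

    def record_reply(self, content: list[dict]) -> None:
        """Keep the analyst's reply so a follow-up question can refer back to it."""
        self.messages.append({"role": "analyst", "content": content})


conv = AnalystConversation("https://myaccount.snowflakecomputing.com",
                           "@analytics.models.semantic_model_stage/revenue.yaml")
first = conv.next_request("What was revenue by region last quarter?")
conv.record_reply([{"type": "sql", "statement": "SELECT region, SUM(amount) FROM orders GROUP BY region"}])
follow_up = conv.next_request("Only EMEA, by month")
```

By the second question the body already carries three messages; in a long analysis session the payload grows with every turn, which is the bookkeeping an in-assistant integration would otherwise absorb.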

Databricks Genie loses on:

- Cloud lock-in (same). Works only against Databricks. The "Lakehouse" framing implies cross-cloud, but Genie is bound to the Databricks runtime.

- Notebook-first surface. Genie shines for data engineers in notebooks. For business users who don't open a notebook, the AI/BI Dashboard surface is more limited; the gap between "engineer's experience" and "business user's experience" is wider on Databricks than on the other two.

- Cost transparency. DBU consumption for Genie queries varies by cluster size and is harder to pre-budget than Cortex credits or Vertex AI usage. Several customers we've talked to track Genie cost as "warehouse cost + a fudge factor."
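The pre-budgeting problem is plain arithmetic: spend is the DBU rate of the chosen cluster or warehouse size, times hours running, times the price per DBU, and the first factor moves with size. A minimal sketch; the size names, DBU rates, and price below are hypothetical placeholders, not Databricks list prices.

```python
def dbu_cost_by_size(dbu_per_hour: dict[str, float], hours: float, usd_per_dbu: float) -> dict[str, float]:
    """Estimated spend per size: DBU rate x hours running x price per DBU."""
    return {size: rate * hours * usd_per_dbu for size, rate in dbu_per_hour.items()}


# Hypothetical inputs: the same 100 hours of Genie-driven queries on three possible sizes.
HYPOTHETICAL_RATES = {"small": 12.0, "medium": 24.0, "large": 48.0}
estimate = dbu_cost_by_size(HYPOTHETICAL_RATES, hours=100.0, usd_per_dbu=0.5)
spread = max(estimate.values()) / min(estimate.values())  # 4.0x between smallest and largest
```

With these placeholder numbers the same workload ranges from 600 to 2,400 dollars depending only on size, which is why a single "Genie budget" figure is hard to commit to in advance.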

BigQuery Gemini loses on:

- Cloud lock-in (same). Works only against BigQuery + Dataform.

- Two surfaces, two answers. BigQuery Gemini in the console and Looker BI Agents in Looker can give different answers to the same question depending on whose semantic layer they read. Customers running both surfaces report inconsistency that takes governance work to resolve.

- Multi-product cost stack. The bill spans BigQuery slot consumption + Vertex AI usage + Looker license. Rolling up "what did the AI cost this month" requires reconciling three line items.
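How "two surfaces, two answers" happens: each surface compiles the same question against its own definition of the metric. A minimal sketch, assuming two hypothetical definitions of "revenue" (gross in one semantic layer, net of refunds in the other) over a toy `sqlite3` table; neither reflects how BigQuery Gemini or Looker actually store definitions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (id INTEGER, amount REAL, refunded REAL);
    INSERT INTO orders VALUES (1, 100.0, 0.0), (2, 50.0, 20.0), (3, 80.0, 80.0);
""")

# Hypothetical: the same metric name, defined separately in two semantic layers.
REVENUE_BY_LAYER = {
    "console_layer": "SELECT SUM(amount) FROM orders",            # gross revenue
    "looker_layer": "SELECT SUM(amount - refunded) FROM orders",  # net of refunds
}


def answers(defs: dict[str, str]) -> dict[str, float]:
    """Run each layer's definition and collect its answer."""
    return {layer: conn.execute(sql).fetchone()[0] for layer, sql in defs.items()}


def consistent(results: dict[str, float]) -> bool:
    """True only if every layer returned the same number."""
    return len(set(results.values())) <= 1


results = answers(REVENUE_BY_LAYER)  # {'console_layer': 230.0, 'looker_layer': 130.0}
```

Both answers are "correct" for their own definition, which is why resolving the conflict is governance work (agreeing on one definition) rather than a model fix.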

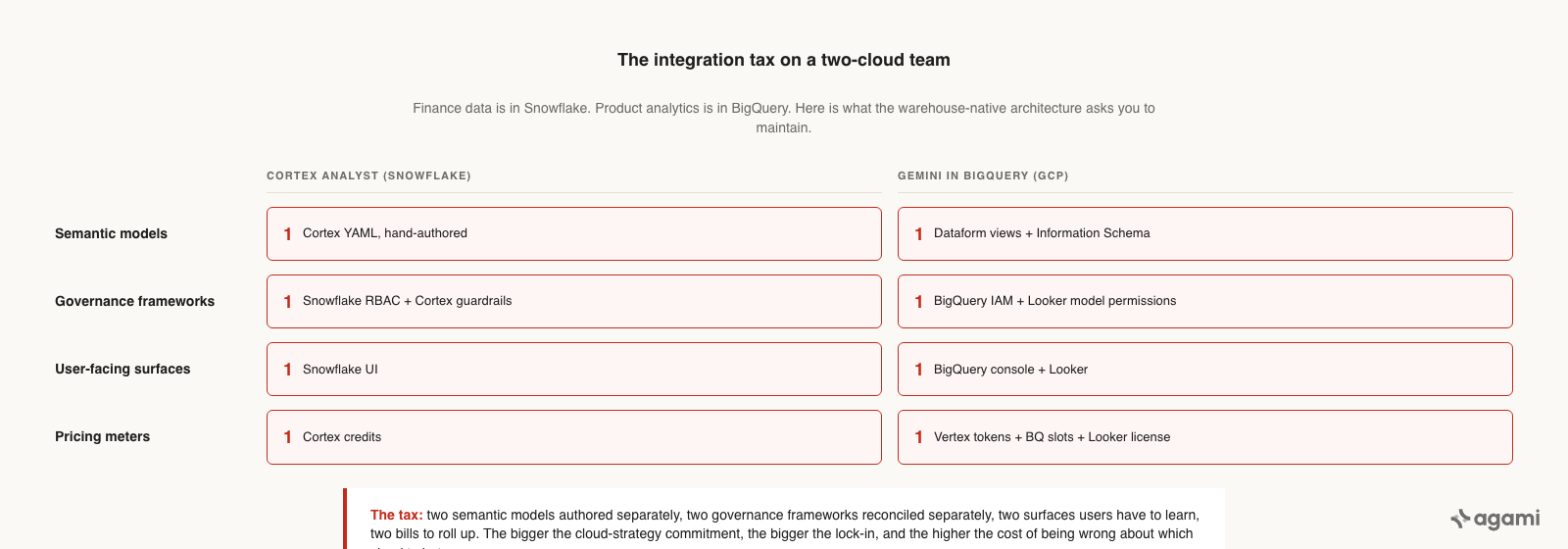

Two clouds, two of everything. The bigger the cloud-strategy commitment, the higher the cost of being wrong about which cloud to bet on.

The feature comparison

The same eight dimensions every Track Competitor post uses, with all three contestants in one table.

| Dimension | Cortex Analyst | Databricks Genie | BigQuery Gemini |
|---|---|---|---|
| Architecture | Cortex inference + Snowflake compute | Databricks runtime + Genie surface in notebooks/dashboards | Vertex AI + BigQuery slots + Looker BI Agents |
| Distribution | Snowflake UI + REST API | Databricks Notebooks + AI/BI Dashboards | BigQuery console + Looker Studio + Looker BI Agents |
| Semantic-model approach | Snowflake-specific YAML, git-versionable | Unity Catalog metadata + descriptions; no separate semantic file | Dataform views + Information Schema |
| Multi-DB / cross-cloud | No (Snowflake only) | No (Databricks only) | No (BigQuery only) |
| Governance posture | Cortex credit guardrails + Snowflake RBAC | Unity Catalog inherited (row-level, column-masking, ABAC) | BigQuery IAM + Looker model permissions |
| Pricing model | Cortex credits per query | DBU consumption per cluster | Vertex AI tokens + BigQuery slots + Looker license |
| Open source / lock-in | Closed; YAML is vendor-specific | Closed; metadata format is Unity-specific | Closed; Dataform is open but the AI integration isn't |
| AI assistant compatibility | Via REST endpoint; no native Claude/ChatGPT integration | Via REST endpoint; no native Claude/ChatGPT integration | Via REST endpoint; native Looker BI Agents but not Claude/ChatGPT |

The bottom row is the architectural shape that matters most for the rest of this post.

What none of them do well

All three products run inside the warehouse vendor's UI. None of them runs inside the assistant.

The architectural blind spot the entire category shares is the user's surface. The three contestants compete on which warehouse they live in. None of them competes on where the user actually works.

The user is in Claude Code, in Claude Desktop, in ChatGPT, in Cursor. The user is writing code, drafting docs, debugging incidents. When they need a data question answered, the friction-free path is "ask the assistant they're already in," not "switch to the warehouse vendor's UI, paste the question, copy the answer back." Each context switch costs 30 to 60 seconds, consistent with the task-switching cost research summarized by APA, and breaks the flow state the assistant otherwise sustains.

All three warehouse-native products treat this gap as somebody else's problem. Cortex Analyst exposes a REST endpoint and tells customers to wire it up. Genie lives inside a notebook. BigQuery Gemini punts to Looker for the chat surface. None of the three runs inside the assistant where the user already is.

There's a second blind spot underneath this one. Each product binds itself to one cloud's warehouse. A team running data on Snowflake for finance and on BigQuery for product analytics has to deploy two of the three products, author two semantic models, train users on two surfaces, and reconcile conflicting answers to the same question. The "warehouse-native" framing is a feature when you're a one-warehouse shop; it's a tax when you're not.

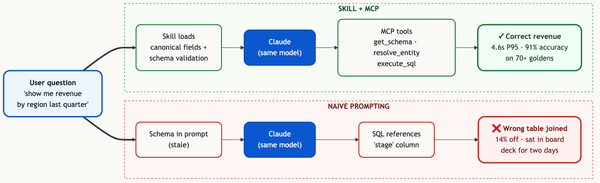

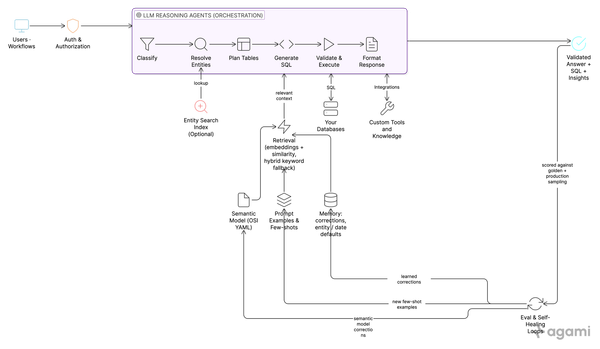

The category shape that closes both gaps is one assistant surface that talks to many warehouses. The assistant lives where the user already is. The warehouse-bridge layer lives where the data already is. The semantic model is portable across LLMs and across warehouses. None of the three contestants in this post offers that shape; the warehouse-native architecture rules it out.

Agami's architecture is a Claude Code Skill plus an MCP server: the Skill runs inside the assistant the user already opens (Claude Code, Claude Desktop, ChatGPT, Cursor), the MCP server is a thin governance layer between that assistant and the warehouse, and the same MCP server speaks Postgres, Snowflake, BigQuery, Redshift, and Databricks. The wedge is not "Agami's NL-to-SQL is better than Cortex's"; Cortex is excellent on its in-warehouse path. The wedge is "lives in the assistant, portable across LLMs and databases, rides on the LLM your team already pays for."
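The "one assistant surface, many warehouses" shape reduces to a router: one entry point, a governance check, and a per-warehouse adapter behind it. A minimal sketch, assuming hypothetical `Gateway` and `SqliteWarehouse` classes, with in-memory `sqlite3` databases standing in for two different warehouses. It is not Agami's implementation, does not use any MCP SDK, and its regex read-only gate is a toy where a production layer would parse the SQL.

```python
import re
import sqlite3
from typing import Protocol


class Warehouse(Protocol):
    def run(self, sql: str) -> list[tuple]: ...


class SqliteWarehouse:
    """Stand-in adapter; a real one would wrap that warehouse's own driver."""

    def __init__(self, setup_sql: str):
        self.conn = sqlite3.connect(":memory:")
        self.conn.executescript(setup_sql)

    def run(self, sql: str) -> list[tuple]:
        return self.conn.execute(sql).fetchall()


# Toy policy: one statement, starting with SELECT (CTEs would need a real parser).
READ_ONLY = re.compile(r"^\s*SELECT\b", re.IGNORECASE)


class Gateway:
    """One entry point for the assistant; governance runs before any warehouse sees SQL."""

    def __init__(self, warehouses: dict[str, Warehouse]):
        self.warehouses = warehouses

    def query(self, target: str, sql: str) -> list[tuple]:
        if target not in self.warehouses:
            raise KeyError(f"unknown warehouse: {target}")
        single = ";" not in sql.strip().rstrip(";")
        if not (single and READ_ONLY.match(sql)):
            raise PermissionError("only single read-only statements are allowed")
        return self.warehouses[target].run(sql)


gateway = Gateway({
    "finance": SqliteWarehouse("CREATE TABLE invoices (amount REAL); INSERT INTO invoices VALUES (120.0), (80.0);"),
    "product": SqliteWarehouse("CREATE TABLE events (user_id INTEGER); INSERT INTO events VALUES (1), (2), (2);"),
})
```

For example, `gateway.query("finance", "SELECT SUM(amount) FROM invoices")` returns `[(200.0,)]`, while `gateway.query("product", "DELETE FROM events")` raises `PermissionError` before the warehouse is touched. Adding a warehouse means adding an adapter; the assistant-facing entry point and the policy stay the same.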

The architectural choice the warehouse-native products closed off: where the AI surface lives.

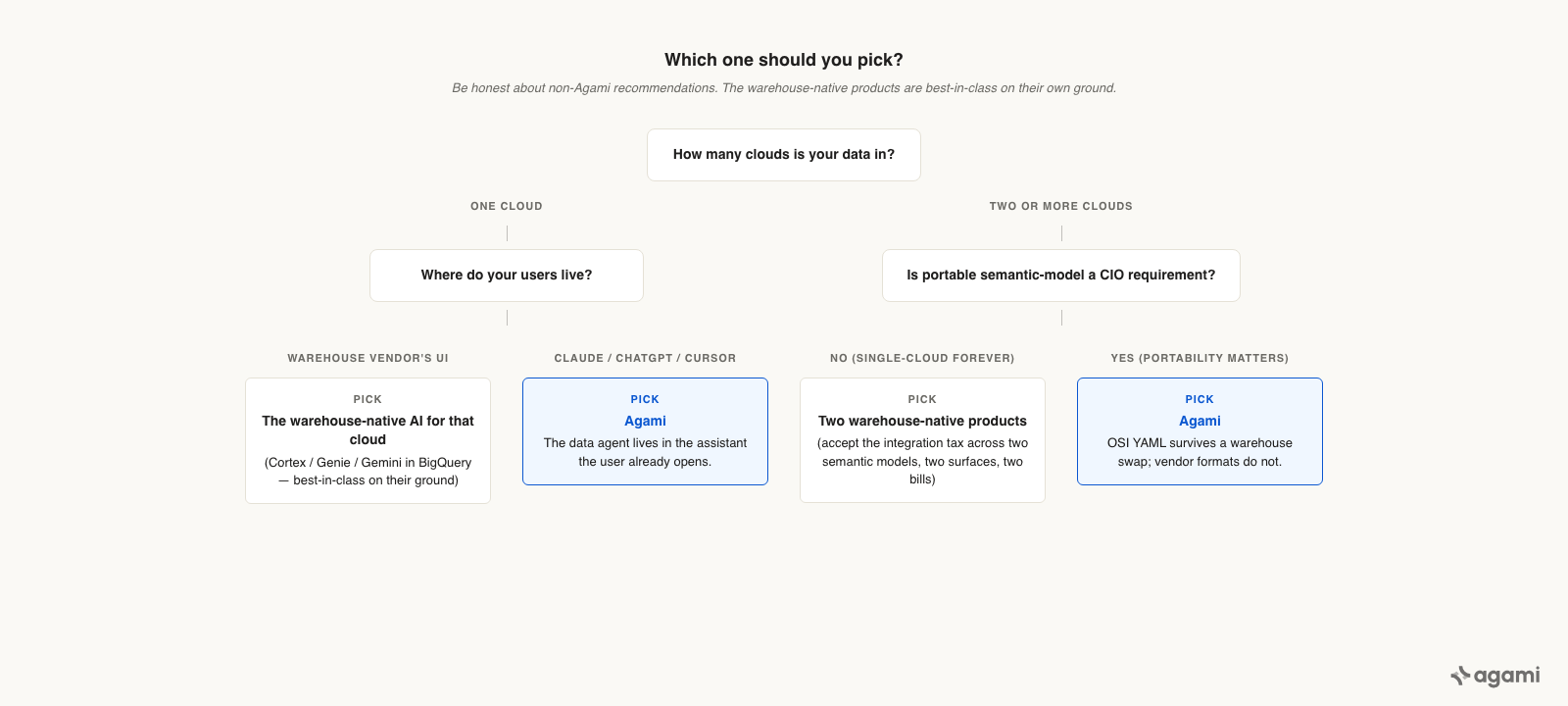

When you'd pick each

A single decision matrix.

| Situation | Pick |
|---|---|
| All data is in Snowflake; users are in the Snowflake UI; team is committed to Snowflake long-term | Cortex Analyst. Best-in-class for that workload. |
| All data is in Databricks; users are data engineers in notebooks; team has Unity Catalog as the source of truth | Databricks Genie. Best-in-class for that workload. |
| All data is in BigQuery; team has a heavy LookML investment; users are happy in Looker | BigQuery Gemini + Looker BI Agents. Best-in-class for that workload. |
| Data is in two or more clouds (Snowflake + BigQuery, Databricks + Postgres, etc.) | Agami. Or stand up two of the warehouse-native products and accept the integration tax. |
| Users live in Claude Code / Claude Desktop / ChatGPT / Cursor for their daily work | Agami. The warehouse-native products' REST integrations are workable but break multi-turn flow. |
| Semantic-model portability is a stated CIO requirement | Agami. Open Semantic Interchange (OSI) YAML survives a warehouse swap; Cortex YAML, Unity Catalog metadata, and Dataform views do not. |
| The AI surface must live inside the warehouse vendor's UI (a hard requirement) | Pick the warehouse-native product matching the warehouse. Agami is the wrong product under that constraint. |

The same matrix as the table above, in tree form for the buying-committee deck.

Frequently asked questions

Q: Are Cortex Analyst, Genie, and BigQuery Gemini direct competitors to each other?

A: Not really; each is bound to its own cloud, so a single customer rarely shops between two of them. The real competition is on cloud strategy: which warehouse the customer commits to. Once that's decided, the warehouse-native AI is a follow-on choice within that ecosystem.

Q: Why doesn't this post compare them to Looker BI Agents or Tableau Pulse?

A: Different category. Looker and Tableau ship a BI tool with an AI surface bolted on; the warehouse-native products ship an AI surface inside the warehouse with an optional thin BI layer. Both categories matter; we cover the BI-tool-AI competition in a separate post.

Q: What if I want all three (Cortex + Genie + BigQuery Gemini)?

A: Multi-cloud teams sometimes do exactly this: a 50-table semantic model in Cortex for finance, a notebook surface on Genie for product analytics, a BigQuery Gemini surface for marketing. The cost is reconciling three semantic models, three governance frameworks, and three pricing meters. This is where Agami's "one assistant, many warehouses" shape closes a real gap.

Q: What's the latency story across the three?

A: All three respond in under 3 seconds on simple questions against a small (≤20-table) semantic model. All three slow down materially on complex questions or large schemas. The variance comes from the query plan rather than the AI surface; the AI step itself is a small share of the latency budget. A separate Performance feature post (forthcoming) will walk through how Agami stays under 5s p95.
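Both the "under 3 seconds" and "under 5s p95" figures are percentile claims, and "the AI step is a small share" is checkable by timing the NL-to-SQL step and the query execution separately. A minimal sketch using Python's `statistics` module; `latency_report` is a hypothetical helper and the timings in the usage line are synthetic.

```python
import statistics


def latency_report(timings: list[tuple[float, float]]) -> dict[str, float]:
    """timings: one (nl_to_sql_ms, query_exec_ms) pair per request."""
    totals = [ai + q for ai, q in timings]
    # 99 cut points; index 94 is the 95th percentile ("inclusive" interpolates within the sample).
    cuts = statistics.quantiles(totals, n=100, method="inclusive")
    return {
        "p50_ms": statistics.median(totals),
        "p95_ms": cuts[94],
        "median_ai_share": statistics.median(ai / (ai + q) for ai, q in timings),
    }


# Synthetic run: a flat 300 ms NL-to-SQL step, query time varying from 100 ms to 2080 ms.
report = latency_report([(300.0, float(q)) for q in range(100, 2100, 20)])
# report["p50_ms"] == 1390.0, report["p95_ms"] == 2281.0
```

Reporting the AI share separately from the totals is what distinguishes "the model is slow" from "the query plan is slow" on your own workload.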

Q: Are any of these portable across clouds?

A: No. Each is bound to its warehouse. The closest thing to portability is BigQuery Gemini's reuse of LookML if the customer has Looker; LookML at least travels to other Looker deployments. None of the three offers warehouse-portability.
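Part of why no metadata format travels: even a one-line metric compiles to different SQL per warehouse. A minimal sketch, assuming a hypothetical `Metric` schema (not the OSI specification or any vendor's format) and a DATE-typed time column; the two `DATE_TRUNC` argument orders are the documented Snowflake and BigQuery forms.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """A warehouse-neutral metric definition (hypothetical schema)."""
    name: str
    table: str
    measure: str      # e.g. "SUM(amount)"
    time_column: str  # assumed to be a DATE column
    grain: str        # e.g. "MONTH", "WEEK"


def date_trunc(dialect: str, column: str, grain: str) -> str:
    # Snowflake takes the unit first as a string; BigQuery takes the column first.
    if dialect == "snowflake":
        return f"DATE_TRUNC('{grain}', {column})"
    if dialect == "bigquery":
        return f"DATE_TRUNC({column}, {grain})"
    raise ValueError(f"unsupported dialect: {dialect}")


def render(metric: Metric, dialect: str) -> str:
    """Compile one portable definition into dialect-specific SQL."""
    period = date_trunc(dialect, metric.time_column, metric.grain)
    return (
        f"SELECT {period} AS period, {metric.measure} AS {metric.name} "
        f"FROM {metric.table} GROUP BY 1 ORDER BY 1"
    )


revenue = Metric("revenue", "orders", "SUM(amount)", "order_date", "MONTH")
snowflake_sql = render(revenue, "snowflake")
bigquery_sql = render(revenue, "bigquery")
```

The definition is the part worth keeping portable; the dialect-specific SQL is generated, so a warehouse swap means adding a renderer rather than re-authoring the model.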

Q: How does Agami handle the single-warehouse case?

A: Agami works fine against a single Snowflake / BigQuery / Databricks / Postgres warehouse. The wedge isn't "Agami requires multiple warehouses"; the wedge is "Agami doesn't require one." If your data is in Snowflake today and you stay there for ten years, Agami is still a workable answer because your users get to keep working in their assistant.

References

- Snowflake, "Cortex Analyst documentation."

- Databricks, "AI/BI Genie documentation."

- Google Cloud, "BigQuery Gemini documentation."

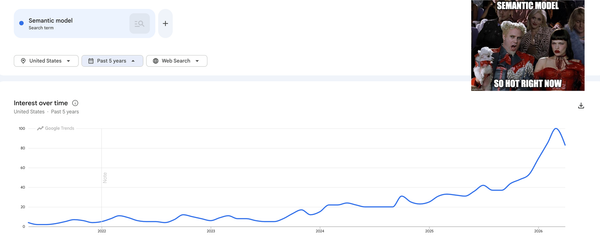

- Agami blog, "The Semantic Model Is Having Its Moment."

- Open Semantic Interchange, "v1 specification."

If you have data in more than one cloud, run the same question against all three.

Bring a query that crosses two of your clouds. We will trace it through Cortex Analyst, Genie, BigQuery Gemini, and Agami back-to-back so you see the answer differences and the integration tax up close. The harder question for the buying committee is not "which warehouse-native AI wins" but "what does the AI surface cost when the cloud commitment changes."